```
Unrecognized JSON config file version, assuming version 1
Overriding handler URLSpec('/api/kernelspecs/(?P+)$',, kwargs=, name=None)
Serving notebooks from local directory: /Users/jtyberg
The Jupyter Notebook is running at: http://localhost:8888/
Use Control-C to stop this server and shut down all kernels (twice to skip confirmation).
```
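The `Overriding handler` line in this log suggests that the nb2kg server extension swaps in its own handlers for kernel-related API paths. The routing decision behind that can be sketched as a small function: requests under the kernel APIs go to the gateway, and everything else (for example, the notebook files served under `/api/contents`) stays on the local Notebook server. This is a hypothetical illustration, not nb2kg's actual code; the prefix list and the `gateway_target` name are assumptions.

```python
from typing import Optional

# Assumption: the kernel-related API prefixes forwarded to the gateway.
# This list is illustrative and is not taken from the nb2kg source.
PROXIED_PREFIXES = ("/api/kernels", "/api/kernelspecs")


def gateway_target(gateway_url: str, local_path: str) -> Optional[str]:
    """Return the gateway URL for a kernel-related request path.

    Returns None when the path should be handled by the local Notebook server
    (for example, /api/contents, which serves the notebook files themselves).
    """
    for prefix in PROXIED_PREFIXES:
        # Match the prefix exactly or as a parent path, so that "/api/kernels"
        # does not accidentally match "/api/kernelspecs".
        if local_path == prefix or local_path.startswith(prefix + "/"):
            return gateway_url.rstrip("/") + local_path
    return None
```

With a hypothetical gateway at `http://127.0.0.1:9001`, the path `/api/kernelspecs/python3` maps to `http://127.0.0.1:9001/api/kernelspecs/python3`, while `/api/contents/notes.ipynb` returns `None` and is served locally.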

Localhost 8889 notebooks jupyter notebook tutorial install

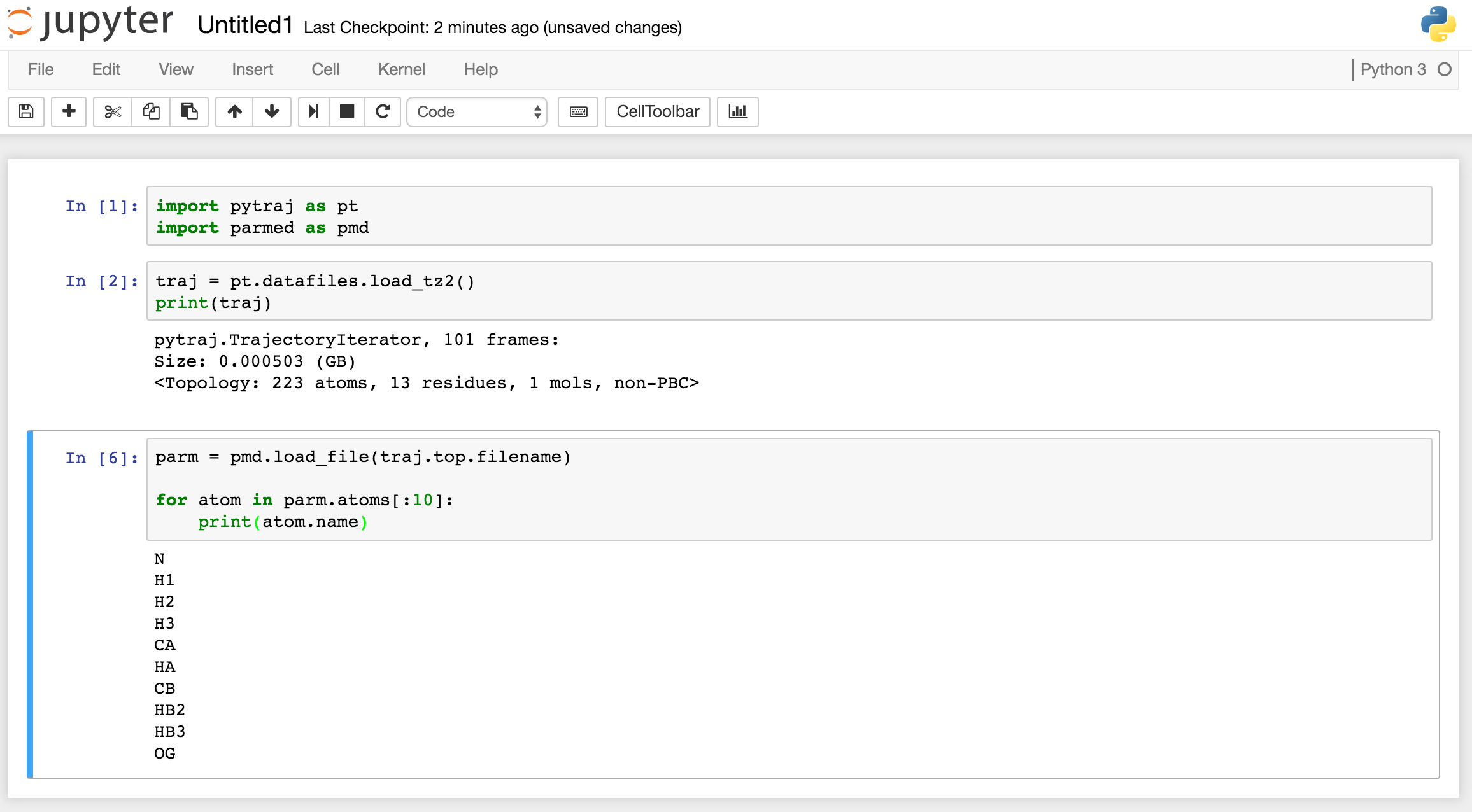

To run Jupyter Notebook with remote kernels, you first need a kernel server that exposes an API to manage and communicate with kernels. Second, you need to modify the default behavior of the Notebook server, which is to spawn kernels as local processes on the same host.

The Jupyter Kernel Gateway satisfies the first need. It allows clients to provision and communicate with kernels using HTTP and web socket protocols.

Jupyter Notebook 4.2 introduced server extensions, which make it possible to extend (or modify) the Notebook server's behavior. I leveraged this new capability to create a demo server extension that modifies the Notebook server to use remote kernels hosted by the Kernel Gateway. For lack of a better name, I called it nb2kg.

The Jupyter documentation includes a diagram of the Jupyter Notebook components. The Notebook web UI (browser) and the Notebook server communicate using HTTP and web socket protocols. By default, the Notebook server spawns kernels on the same host as the server, and the server and kernel processes communicate using ZeroMQ.

The nb2kg extension essentially proxies all kernel requests and web socket communication from the Notebook web UI to a Kernel Gateway. With the extension in place, the browser talks to the Notebook server, the Notebook server talks to the Kernel Gateway, and the Kernel Gateway talks to the kernels.

This section provides an example of running a Jupyter Kernel Gateway and the Jupyter Notebook server with the nb2kg extension in a conda environment. (The nb2kg Kernel Gateway demo also includes Dockerfiles and a docker-compose recipe if you care to try it using Docker.)

First, open a terminal and create and activate a new conda environment. Then install Jupyter Notebook version 4.2 or later.
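The Kernel Gateway's HTTP and web socket API can be exercised from any client. Below is a minimal sketch using only the Python standard library, assuming the gateway runs in its default `jupyter-websocket` mode, where it serves the Jupyter kernels REST API (`POST /api/kernels` to start a kernel, `/api/kernels/{kernel_id}/channels` for the web socket). The function names are hypothetical helpers, not part of any library.

```python
import json
import urllib.request
from urllib.parse import urlsplit, urlunsplit


def start_kernel_request(gateway_url: str, kernel_name: str = "python3") -> urllib.request.Request:
    """Build (without sending) the POST /api/kernels request that starts a kernel."""
    body = json.dumps({"name": kernel_name}).encode("utf-8")
    return urllib.request.Request(
        gateway_url.rstrip("/") + "/api/kernels",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def start_kernel(gateway_url: str, kernel_name: str = "python3") -> dict:
    """Send the request to a running gateway; the JSON reply describes the new kernel."""
    with urllib.request.urlopen(start_kernel_request(gateway_url, kernel_name)) as resp:
        return json.loads(resp.read().decode("utf-8"))


def channels_ws_url(gateway_url: str, kernel_id: str) -> str:
    """Turn the gateway's HTTP base URL into the web socket URL for a kernel's channels."""
    parts = urlsplit(gateway_url)
    scheme = "wss" if parts.scheme == "https" else "ws"
    path = parts.path.rstrip("/") + "/api/kernels/" + kernel_id + "/channels"
    return urlunsplit((scheme, parts.netloc, path, "", ""))
```

Against a running gateway (any address, such as `http://127.0.0.1:9001`, is hypothetical here), `start_kernel(url)["id"]` gives the kernel ID to pass to `channels_ws_url`; the resulting URL is what a web socket client connects to. Building the request separately from sending it keeps the URL logic testable without a live server.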

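Over the web socket, the browser exchanges Jupyter protocol messages encoded as JSON text frames. As a minimal sketch, assuming version 5.x of the Jupyter messaging protocol, the code below builds an `execute_request` frame and checks whether an incoming frame was caused by it. The function names are hypothetical; the field names follow the messaging specification, and the extra `channel` key tells the server which of the kernel's ZeroMQ channels the message belongs to.

```python
import json
import uuid
from datetime import datetime, timezone


def execute_request(code: str, session_id: str) -> str:
    """Serialize an 'execute_request' as the JSON text frame sent over /channels."""
    msg = {
        "header": {
            "msg_id": uuid.uuid4().hex,
            "username": "",
            "session": session_id,
            "date": datetime.now(timezone.utc).isoformat(),
            "msg_type": "execute_request",
            "version": "5.0",  # assumption: messaging protocol 5.x
        },
        "parent_header": {},
        "metadata": {},
        "content": {
            "code": code,
            "silent": False,
            "store_history": True,
            "user_expressions": {},
            "allow_stdin": False,
            "stop_on_error": True,
        },
        # The web socket multiplexes the kernel's channels; execution requests go to "shell".
        "channel": "shell",
        "buffers": [],
    }
    return json.dumps(msg)


def is_reply_to(frame: str, request_frame: str) -> bool:
    """True if an incoming frame (reply or iopub output) names the request as its parent."""
    parent = json.loads(frame).get("parent_header", {})
    return parent.get("msg_id") == json.loads(request_frame)["header"]["msg_id"]
```

Matching on `parent_header.msg_id` is how a client sorts the stream of `iopub` output and `shell` replies back to the request that produced them.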
Localhost 8889 notebooks jupyter notebook tutorial drivers

Jupyter Notebook uses kernels to execute code interactively. By default, the Jupyter Notebook server runs kernels as separate processes on the same host. However, there are scenarios where it is necessary or beneficial to have the Notebook server use kernels that run remotely. A good example is when you want to use notebooks to explore and analyze large data sets on an Apache Spark cluster that runs in the cloud. In this case, the notebook kernel becomes the Spark driver program, and because the driver schedules tasks on the cluster, Spark recommends running it as close as possible to the worker nodes, preferably on the same local area network.
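To see why local kernels are tied to the server's host, consider how the Notebook server reaches a kernel it spawns: through five ZeroMQ channels described by a connection file. The sketch below builds connection-file-style settings and the resulting endpoints, assuming the layout used by `jupyter_client` (shell, iopub, stdin, control and heartbeat ports, bound to `127.0.0.1` over TCP). The helper names are hypothetical; a real server lets `jupyter_client` choose the ports and write the file.

```python
import secrets
import socket

# The five ZeroMQ channels a Jupyter kernel listens on.
CHANNELS = ("shell", "iopub", "stdin", "control", "hb")


def local_connection_info(ip: str = "127.0.0.1") -> dict:
    """Build connection-file-style settings for a kernel spawned on this host."""
    sockets = []
    try:
        # Keep every probe socket open until all ports are chosen so the five are distinct.
        for _ in CHANNELS:
            s = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
            sockets.append(s)
            s.bind((ip, 0))  # port 0: let the OS pick a free port
        ports = [s.getsockname()[1] for s in sockets]
    finally:
        for s in sockets:
            s.close()
    info = {f"{ch}_port": port for ch, port in zip(CHANNELS, ports)}
    info.update(
        ip=ip,
        transport="tcp",
        key=secrets.token_hex(16),  # shared secret used to sign messages
        signature_scheme="hmac-sha256",
    )
    return info


def zmq_endpoints(info: dict) -> dict:
    """Map each channel to the ZeroMQ endpoint the Notebook server connects to."""
    return {ch: f"{info['transport']}://{info['ip']}:{info[ch + '_port']}" for ch in CHANNELS}
```

Because these endpoints sit on the loopback interface, a kernel started this way is reachable only from the same machine. Placing the kernel (and therefore the Spark driver) next to a remote cluster means hosting it elsewhere and reaching it over HTTP and web sockets instead, which is the role of the Kernel Gateway.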